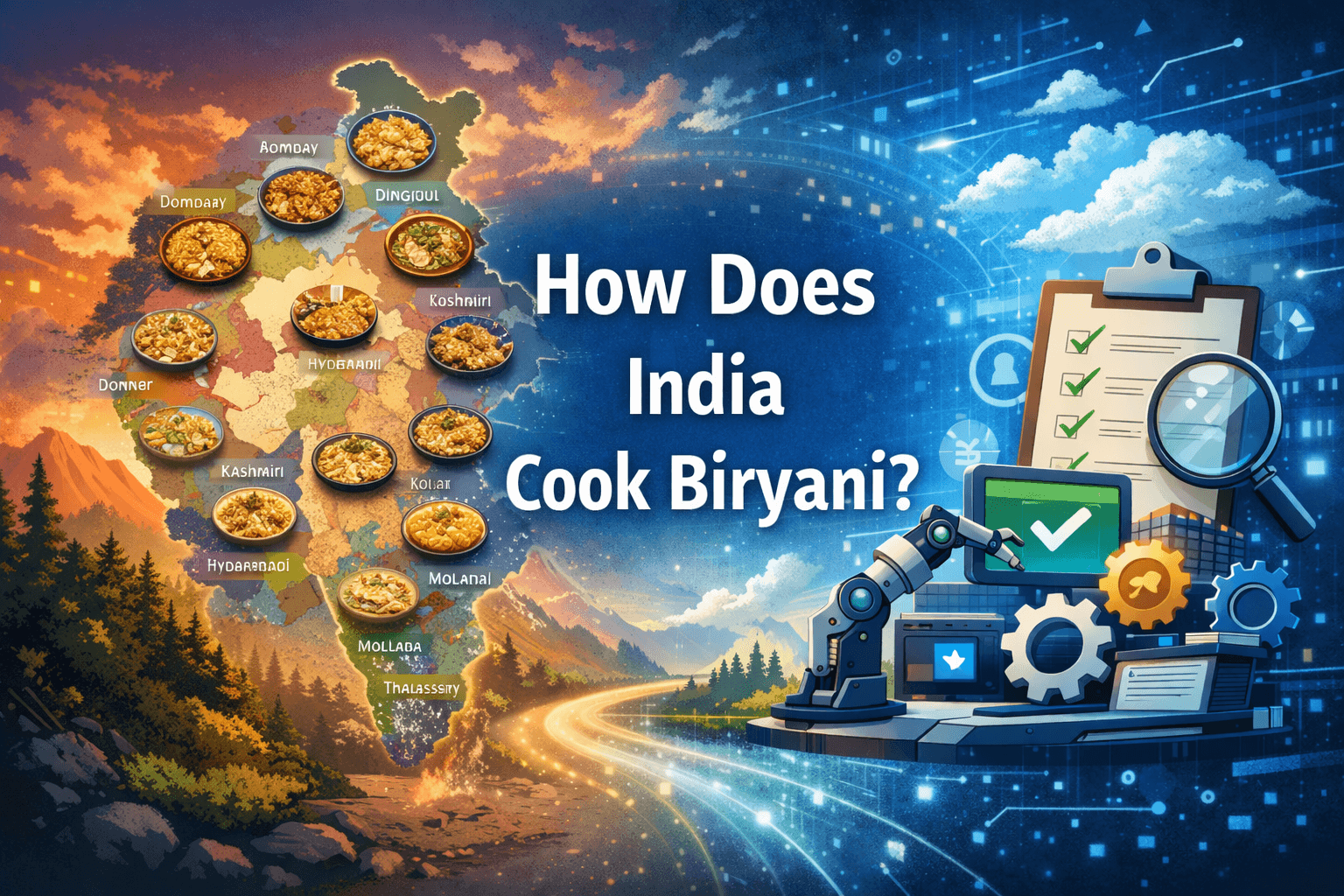

How Does India Cook Biryani?

With nearly three decades of experience in technology consulting, community building, and open-source advocacy, I am passionate about empowering individuals and organizations to thrive in the digital age.

As the Founder of AFROST and a co-founder of PyDelhi.org, I've built and led high-impact tech communities and organized marquee events like PyCon India, directly supporting the growth of India's open-source ecosystem.

My expertise spans Linux, Python, Kubernetes, AI, and DevOps, and I have partnered with leading organizations such as Red Hat, Cadence, and IIIT. I take pride in having mentored and trained over 50,000 professionals, helping them launch successful careers and tech startups.

I believe in the power of collaboration, open knowledge, and inclusive leadership. If you share a passion for technology, open source, or building impactful communities, let’s connect and explore how we can make a difference together.

Today I don’t want to talk about ML/AI in general; plenty has already been said and the Internet is full of the buzz. On December 17-20, a team from IIIT Hyderabad presented a paper with the same title as this blog post, and it went viral for its catchy, simple title. The paper is real, though: it is a comprehensive study of biryani preparation videos across India, highlighting regional diversity and procedural differences.

Introduction to Biryani Diversity

Biryani is a culturally significant dish in India, showcasing diverse regional variations in preparation, ingredients, and presentation.

The study aims to systematically analyze these variations using computational tools, particularly through online cooking videos.

The paper emphasizes the need for advanced video understanding methods to capture fine-grained differences in cooking processes.

Dataset Creation and Methodology

A curated dataset of 120 high-quality YouTube videos was compiled, representing 12 distinct regional biryani styles.

The dataset includes videos of styles such as Ambur, Hyderabadi, and Kolkata biryani, among others, showcasing authentic cooking practices.

The methodology involves a multi-stage framework that segments videos into procedural units and aligns them with audio transcripts and canonical recipes.

Video Segmentation and Alignment

The framework utilizes vision-language models (VLMs) to extract annotations of actions, ingredients, and utensils from video segments.

Segments are then post-processed to improve temporal coherence: consecutive segments with the same action are merged into one.
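To make the merging step concrete, here is a minimal sketch in Python. The `Segment` class, its fields, and the sample action labels are my own assumptions for illustration; the paper's actual data format may well differ.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    start: float  # seconds from the start of the video
    end: float
    action: str   # hypothetical action label produced by a VLM


def merge_consecutive(segments):
    """Merge adjacent segments that carry the same action label."""
    merged = []
    for seg in segments:
        if merged and merged[-1].action == seg.action:
            # Same action as the previous segment: extend it instead of
            # starting a new one.
            merged[-1] = Segment(merged[-1].start, seg.end, seg.action)
        else:
            merged.append(Segment(seg.start, seg.end, seg.action))
    return merged


segments = [
    Segment(0, 10, "chop onions"),
    Segment(10, 20, "chop onions"),
    Segment(20, 35, "fry onions"),
    Segment(35, 50, "chop onions"),
]
merged = merge_consecutive(segments)
for seg in merged:
    print(seg)
```

Note that only neighbouring segments are merged: the last "chop onions" segment stays separate because a different action sits in between.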

A heatmap is generated to visualize the alignment between recipe steps and video transcripts, indicating semantic similarities.
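The heatmap is essentially a similarity matrix with one row per recipe step and one column per transcript chunk. The sketch below builds such a matrix using a plain bag-of-words cosine similarity as a stand-in; I am not claiming this is the similarity measure used in the paper, and the sample recipe steps and transcript chunks are made up.

```python
import math
from collections import Counter


def bow(text):
    """Lower-cased bag-of-words vector for a piece of text."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def similarity_matrix(recipe_steps, transcript_chunks):
    """Rows are recipe steps, columns are transcript chunks."""
    step_vecs = [bow(s) for s in recipe_steps]
    chunk_vecs = [bow(c) for c in transcript_chunks]
    return [[cosine(s, c) for c in chunk_vecs] for s in step_vecs]


recipe_steps = [
    "marinate the chicken",
    "fry the onions",
    "layer rice and chicken",
]
transcript_chunks = [
    "first we marinate the chicken with yogurt",
    "now fry the onions until golden",
    "finally layer the rice and the chicken",
]
matrix = similarity_matrix(recipe_steps, transcript_chunks)
for row in matrix:
    print(" ".join(f"{v:.2f}" for v in row))
```

Plotting `matrix` as a heatmap would show a bright diagonal when the video follows the recipe in order, and off-diagonal hot spots when steps are reordered or repeated.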

Video Comparison Framework

A video comparison pipeline is introduced to analyze procedural differences between various biryani recipes.

The framework identifies and visualizes differences in cooking actions, ingredients, and techniques used across different biryani styles.

Results indicate that 33.2% of action comparisons reveal meaningful differences, highlighting the unique characteristics of each biryani variant.
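One simple way to picture a procedural comparison is to diff two action sequences. The sketch below uses Python's standard `difflib` for this; it is my own illustration, not the paper's pipeline, and the two action lists are simplified examples rather than data from the study.

```python
from difflib import SequenceMatcher


def compare_actions(a, b):
    """Return the non-matching spans between two action sequences."""
    matcher = SequenceMatcher(None, a, b, autojunk=False)
    diffs = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            # tag is one of "insert", "delete", or "replace"
            diffs.append((tag, a[i1:i2], b[j1:j2]))
    return diffs


# Hypothetical, simplified action sequences for two styles.
hyderabadi = [
    "marinate meat",
    "parboil rice",
    "layer raw meat and rice",
    "dum cook",
]
kolkata = [
    "marinate meat",
    "fry potatoes",
    "parboil rice",
    "layer cooked meat and rice",
    "dum cook",
]
diffs = compare_actions(hyderabadi, kolkata)
for tag, left, right in diffs:
    print(tag, left, "->", right)
```

A real system would need to match actions by meaning rather than by exact string, which is where the vision-language models come in.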

Question-Answering Benchmark Development

A comprehensive question-answering (QA) benchmark was constructed to evaluate procedural understanding in VLMs.

The QA dataset includes three difficulty tiers: easy (segment-level), medium (whole video comprehension), and hard (multi-video reasoning).

The dataset aims to assess models' abilities to reason about cooking processes, ingredient usage, and procedural flow.

Evaluation of Vision-Language Models

Several state-of-the-art VLMs were benchmarked on the QA dataset, revealing performance differences between zero-shot and fine-tuned settings.

Fine-tuned models, particularly Llama-3.2, outperformed zero-shot models, especially on medium and hard questions.

Evaluation used BLEU, ROUGE-L, and BERTScore, which score how closely a model's answer matches the reference answer in wording and meaning.
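Of these metrics, ROUGE-L is the easiest to show by hand: it is based on the longest common subsequence (LCS) of words between the reference and the model's answer. Below is a minimal sketch of the F1 variant with naive whitespace tokenization; real evaluations normally use an established library, and the sample sentences are mine.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, 1):
            if x == y:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(reference, candidate):
    """ROUGE-L F1 over whitespace tokens (a simplified variant)."""
    ref = reference.lower().split()
    cand = candidate.lower().split()
    lcs = lcs_length(ref, cand)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)
    recall = lcs / len(ref)
    return 2 * precision * recall / (precision + recall)


reference = "fry the onions until golden"
candidate = "fry onions until brown"
score = rouge_l_f1(reference, candidate)
print(f"ROUGE-L F1: {score:.3f}")
```

Here the LCS is "fry onions until" (3 words), so precision is 3/4, recall is 3/5, and the F1 score is 2/3.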

Applications and Future Directions

The study opens avenues for skill-based video retrieval, allowing users to search for specific cooking techniques across videos.
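As a rough idea of what skill-based retrieval could look like once videos are annotated with actions, here is a small sketch of an index from action labels to video timestamps. The video IDs, timestamps, and function names are all hypothetical, and the substring matching is a deliberate simplification.

```python
from collections import defaultdict


def build_action_index(annotations):
    """Map each lower-cased action label to the segments where it occurs."""
    index = defaultdict(list)
    for video_id, start, end, action in annotations:
        index[action.lower()].append((video_id, start, end))
    return index


def search(index, query):
    """Return all segments whose action label contains the query string."""
    q = query.lower()
    return sorted(
        hit for action, hits in index.items() if q in action for hit in hits
    )


# Hypothetical annotations: (video id, start second, end second, action).
annotations = [
    ("ambur_01", 30, 75, "Dum cooking"),
    ("kolkata_03", 120, 180, "Fry potatoes"),
    ("hyderabadi_02", 400, 520, "Dum cooking"),
]
index = build_action_index(annotations)
results = search(index, "dum")
for video_id, start, end in results:
    print(f"{video_id}: {start}s to {end}s")
```

A user searching for "dum" would jump straight to the relevant part of each video instead of watching the whole thing.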

Potential applications include educational tools and cooking assistants that provide contextual assistance during cooking.

Future work may expand the dataset to include other culturally significant dishes and improve alignment robustness in noisy narration contexts.